The tank you're picturing (heavy armor, big gun, crew of four) is only half the story of what a modern armored vehicle actually is. The other half is invisible: a dense web of vetronics systems, digital radio links, and battlefield networking software that connects every vehicle in a formation to every other one, and to commanders sitting kilometers away. To understand battlefield networking and vetronics is to understand how armored warfare has fundamentally changed over the last two decades. It's not about who has the biggest shell anymore. It's about who has the clearest picture.

What Vetronics Actually Means (And Why the Term Gets Misused)

"Vetronics" is a portmanteau of vehicle and electronics, and it was coined by the U.S. Army in the 1970s to describe the growing suite of electronic systems being integrated into armored platforms. Today the term covers everything from fire control computers and thermal sights to crew intercommunication systems, navigation equipment, power management units, and the onboard computing infrastructure that ties all of it together.

What it does not mean, despite how some defense journalists use it, is just "fancy electronics on a tank." Vetronics is a systems integration discipline. The challenge isn't building a good thermal camera or a good radio. It's building a vehicle architecture where all of those things share data, share power, and don't interfere with each other, while surviving the vibration, temperature swings, and electromagnetic noise of a combat environment.

A useful way to think about it: avionics is to aircraft what vetronics is to armored vehicles. The analogy holds pretty well. Both disciplines deal with sensor fusion, crew interfaces, redundancy engineering, and the fundamental tension between adding capability and adding weight and complexity.

The Architecture Under the Armor: How Vehicle Electronics Are Organized

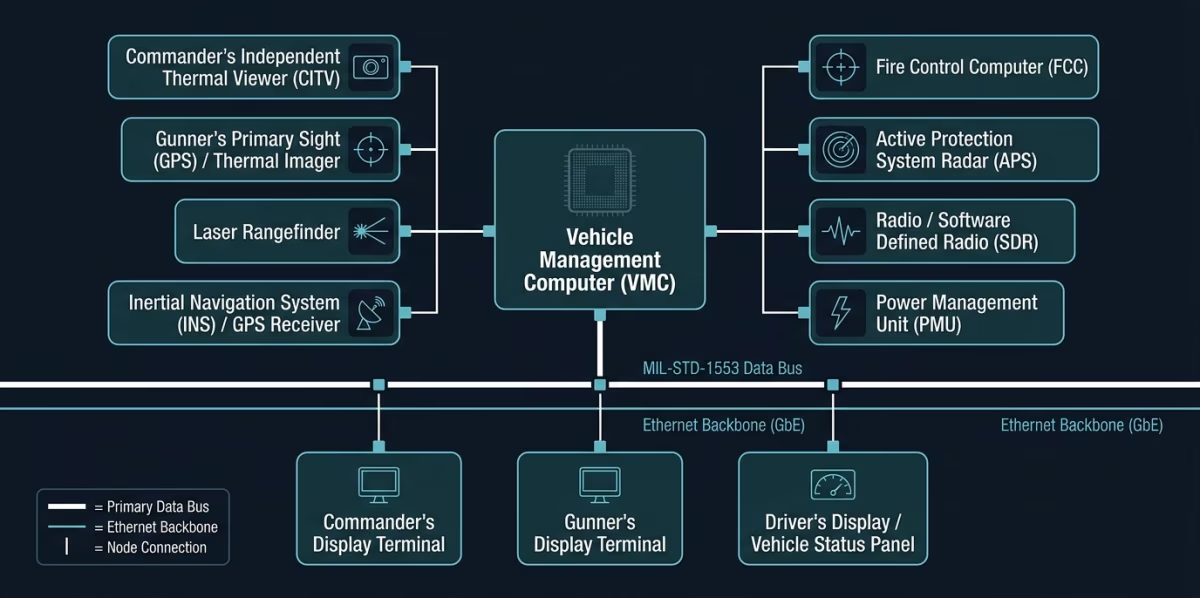

Modern armored vehicles don't wire their electronics the way a car does. Rather than running dedicated wires from each component to each other component, they use a data bus architecture. Think of a data bus as a shared highway that all onboard systems connect to and communicate over. The most common standard in Western armored vehicles is MIL-STD-1553, a serial data bus developed in the 1970s that is still widely used because of its deterministic timing and fault tolerance. Newer platforms increasingly add Ethernet-based backbones alongside it to handle the higher data throughput demanded by digital video and sensor fusion.
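To make the bus idea concrete, here is a minimal Python sketch of how a MIL-STD-1553 bus controller packs a command word. The word holds a 5-bit remote terminal (RT) address, a transmit/receive bit, a 5-bit subaddress, and a 5-bit word count, followed by an odd-parity bit. The sketch models only those fields. Sync patterns, Manchester encoding, mode codes, and broadcast are deliberately left out, and the function names are illustrative rather than any library's API.

```python
# Minimal sketch of MIL-STD-1553 command-word packing.
# Assumptions: only the 16 information bits and the odd-parity bit are
# modeled; the sync pattern, Manchester II encoding, mode codes
# (subaddress 0 or 31) and broadcast (RT address 31) are not handled.

def encode_command_word(rt_address: int, transmit: bool,
                        subaddress: int, word_count: int) -> tuple[int, int]:
    """Return (16-bit command word, parity bit) for a data-transfer command."""
    if not 0 <= rt_address <= 30:
        raise ValueError("RT address must be 0-30 (31 is reserved for broadcast)")
    if not 1 <= subaddress <= 30:
        raise ValueError("subaddress must be 1-30 (0 and 31 signal mode codes)")
    if not 1 <= word_count <= 32:
        raise ValueError("word count must be 1-32")
    # A count of 32 data words is encoded as 00000 in the 5-bit field.
    wc_field = word_count % 32
    word = (rt_address << 11) | (int(transmit) << 10) | (subaddress << 5) | wc_field
    # Odd parity: the parity bit makes the total number of 1s (16 bits + parity) odd.
    parity = 0 if bin(word).count("1") % 2 == 1 else 1
    return word, parity


def decode_command_word(word: int) -> dict:
    """Unpack the fields of a 16-bit command word."""
    wc_field = word & 0x1F
    return {
        "rt_address": (word >> 11) & 0x1F,
        "transmit": bool((word >> 10) & 0x1),
        "subaddress": (word >> 5) & 0x1F,
        "word_count": wc_field if wc_field else 32,
    }


if __name__ == "__main__":
    # Bus controller asks remote terminal 5 to transmit 4 words from subaddress 2.
    word, parity = encode_command_word(5, True, 2, 4)
    print(f"{word:016b} parity={parity}")
    print(decode_command_word(word))
```

Every transfer begins with a command word like this from the single bus controller, so remote terminals only respond when asked. That is where the standard's deterministic timing comes from.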

On top of that bus architecture sits a vehicle management computer, sometimes called a mission computer or a vetronics integration unit. This is the brain that coordinates system states, manages sensor data, handles built-in test equipment functions, and provides the crew with a unified display rather than a cockpit full of separate gauges and screens. The M1A2 SEPv3 Abrams, the Leopard 2A7, and the British Challenger 3 all follow variants of this basic architecture, though they differ significantly in implementation.
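One of that mission computer's jobs is collapsing many built-in test results into a single crew-facing status. It can be sketched as follows. The subsystem names, the three-level GO/DEGRADED/NO_GO scale, and the worst-state-wins rule are assumptions for illustration and are not taken from any of the platforms named above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Health(IntEnum):
    """Ordered so that max() picks the worst state."""
    GO = 0
    DEGRADED = 1
    NO_GO = 2


@dataclass(frozen=True)
class BitResult:
    subsystem: str   # hypothetical names, e.g. "thermal_sight"
    health: Health
    detail: str = ""


def summarize(results: list[BitResult]) -> tuple[Health, list[str]]:
    """Collapse per-subsystem BIT results into one crew-facing status.

    Returns the worst health state plus readable fault lines for
    anything that is not GO, worst first.
    """
    if not results:
        # No data at all is treated as a failure, not as "all good".
        return Health.NO_GO, ["no BIT data received"]
    overall = max(r.health for r in results)
    faults = [
        f"{r.subsystem}: {r.health.name} {r.detail}".rstrip()
        for r in sorted(results, key=lambda r: r.health, reverse=True)
        if r.health is not Health.GO
    ]
    return overall, faults


if __name__ == "__main__":
    status, faults = summarize([
        BitResult("fire_control", Health.GO),
        BitResult("thermal_sight", Health.DEGRADED, "cooler slow to reach temperature"),
        BitResult("nav_unit", Health.GO),
    ])
    print(status.name, faults)
```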

Battlefield Networking: From Isolated Vehicles to a Fighting System

A single well-equipped armored vehicle is dangerous. A company of them that can see each other's positions, share target data, and coordinate movement in real time is a fundamentally different kind of threat. That's the promise of battlefield networking, and it's the part of vetronics that has seen the most investment and the most growing pains over the last fifteen years.

The basic requirement is what the U.S. military calls situational awareness: every crew knowing where every friendly vehicle is, what threats have been identified, and what the current scheme of maneuver is. In the 1990s, this happened over voice radio, with vehicle commanders manually plotting positions on paper maps. The Blue Force Tracker system, introduced during Operation Iraqi Freedom, was an early attempt to automate this. Each vehicle broadcast its GPS position over a satellite datalink, and every other vehicle with the system could see friendly icons on a digital map display.
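The core of what Blue Force Tracker automated, turning a stream of position reports into one icon per friendly unit, fits in a few lines. This is a sketch that assumes three things: each report carries a unit ID and a timestamp from a shared time base, older reports that arrive late are discarded, and the staleness threshold is a hypothetical value rather than a real BFT parameter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PositionReport:
    unit_id: str
    lat: float
    lon: float
    timestamp: float  # seconds, from a shared time base such as GPS time


class FriendlyPicture:
    """Keeps the most recent position report per friendly unit."""

    def __init__(self, stale_after_s: float = 300.0):
        self.stale_after_s = stale_after_s  # hypothetical threshold
        self._tracks: dict[str, PositionReport] = {}

    def ingest(self, report: PositionReport) -> bool:
        """Store a report unless a newer one is already held. Returns True if stored."""
        current = self._tracks.get(report.unit_id)
        if current is not None and current.timestamp >= report.timestamp:
            return False  # out-of-order or duplicate delivery
        self._tracks[report.unit_id] = report
        return True

    def icons(self, now: float) -> list[tuple[str, float, float, bool]]:
        """Return (unit_id, lat, lon, is_stale) for each unit, sorted by ID."""
        return [
            (r.unit_id, r.lat, r.lon, now - r.timestamp > self.stale_after_s)
            for r in sorted(self._tracks.values(), key=lambda r: r.unit_id)
        ]
```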

Blue Force Tracker was transformative, but it was also slow, satellite-dependent, and it only moved position data. Modern systems have to move targets, threat assessments, fire missions, and logistics status simultaneously.

The current generation of battlefield networking for armored forces in NATO armies runs on systems like the U.S. Army's Joint Battle Command-Platform (JBC-P), which replaced Blue Force Tracker and added voice, data, and mission command applications over a waveform that works across multiple radio types. The British Army uses Bowman, now being replaced by the next-generation Morpheus program. Germany's armored forces have relied on the SEM 90 family of radios integrated into their FENNEK and Leopard platforms, with the newer Software Defined Radio suite providing waveform flexibility that older hardware simply couldn't offer.

What matters most for a crew isn't the brand name of the system. It's what that system actually delivers at the vehicle level. A good battlefield network for an armored formation needs to do four things reliably: share position data with low latency, distribute target information across the formation before the target moves, allow commanders to push updated orders without requiring voice radio calls, and do all of this in an environment where the enemy is actively jamming and direction-finding your emissions. That last part is where a lot of programs have struggled.
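The latency requirement is easy to quantify. A vehicle moving at 36 km/h covers 10 m every second, so a report that takes 5 seconds to reach the formation is already 50 m out of date. The sketch below computes that error. It also shows dead reckoning, one common way to extrapolate a stale report forward, though not necessarily what any fielded system does. It assumes constant speed and heading on a flat local grid measured in metres.

```python
import math


def position_error_m(speed_kmh: float, latency_s: float) -> float:
    """Distance a target travels while its report is in transit."""
    return speed_kmh / 3.6 * latency_s


def dead_reckon(x_m: float, y_m: float, speed_kmh: float,
                heading_deg: float, age_s: float) -> tuple[float, float]:
    """Extrapolate a reported position forward by the report's age.

    Assumes constant speed and heading; heading is measured clockwise
    from north (+y) on a flat local grid in metres.
    """
    d = speed_kmh / 3.6 * age_s
    rad = math.radians(heading_deg)
    return x_m + d * math.sin(rad), y_m + d * math.cos(rad)


if __name__ == "__main__":
    print(position_error_m(36, 5))         # metres of error from 5 s latency
    print(dead_reckon(0.0, 0.0, 36, 90, 5))  # target heading due east
```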

If you're researching this topic for a procurement or capability analysis context, the NATO C2 Systems Working Group publishes architecture standards worth reading alongside the platform-specific documentation. Understanding where a system conforms to coalition interoperability standards versus where it's proprietary tells you a lot about how useful it actually is in a combined-arms or multinational operation.

The Sensors That Feed the Network

A battlefield network is only as useful as the data going into it. For armored vehicles, the primary sensors that generate that data are thermal imaging systems, laser rangefinders, radar (on some platforms), and increasingly, acoustic and seismic sensors that can detect vehicle movement at distance. The integration of these sensors into a common operating picture is one of the harder engineering problems in modern vetronics.
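Building a common operating picture starts with deciding which reports from different vehicles and sensors describe the same object. The sketch below uses greedy nearest-neighbour association with a fixed distance gate. It is a deliberately simple stand-in for the filtering and statistical gating that real fusion engines use, and the 100 m gate and the field names are assumptions.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Detection:
    source: str      # reporting vehicle (hypothetical IDs)
    sensor: str      # "thermal", "radar", "acoustic", ...
    x_m: float
    y_m: float


@dataclass
class FusedTrack:
    x_m: float
    y_m: float
    contributors: list = field(default_factory=list)


def fuse(detections: list[Detection], gate_m: float = 100.0) -> list[FusedTrack]:
    """Greedy nearest-neighbour association on a flat local grid.

    Each detection joins the closest existing track within gate_m,
    otherwise it starts a new track. A track's position is the running
    mean of its contributors.
    """
    tracks: list[FusedTrack] = []
    for det in detections:
        best, best_d = None, gate_m
        for trk in tracks:
            d = math.hypot(det.x_m - trk.x_m, det.y_m - trk.y_m)
            if d <= best_d:
                best, best_d = trk, d
        if best is None:
            tracks.append(FusedTrack(det.x_m, det.y_m, [det]))
        else:
            best.contributors.append(det)
            n = len(best.contributors)
            # Incremental mean update: new_mean = old_mean + (x - old_mean) / n
            best.x_m += (det.x_m - best.x_m) / n
            best.y_m += (det.y_m - best.y_m) / n
    return tracks
```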

The commander's independent thermal viewer, fitted to the M1A2 and several other modern main battle tanks, is a good example of how sensor integration changes tactical options. In older tanks, the commander had to look through the same sight as the gunner to designate targets. With an independent thermal viewer slaved to the fire control system, a commander can be scanning for new threats while the gunner is engaging the current one. That "hunter-killer" capability seems like a small detail until you consider how short the average engagement window is in a high-tempo fight.
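The hunter-killer division of labour is essentially a producer-consumer queue. The commander adds designations while the gunner is still busy, and the next target is ready the moment the current engagement ends. This is a hypothetical model of the workflow, not of any real fire control software.

```python
from collections import deque
from typing import Optional


class HunterKillerQueue:
    """Hypothetical model: the commander's sight appends designations,
    and the gunner takes the next one only when the current engagement
    is finished."""

    def __init__(self) -> None:
        self._pending: deque = deque()
        self.engaging: Optional[str] = None

    def commander_designate(self, target_id: str) -> None:
        # Ignore targets already being engaged or already queued.
        if target_id != self.engaging and target_id not in self._pending:
            self._pending.append(target_id)

    def gunner_next(self) -> Optional[str]:
        """Finish the current engagement and hand off the next target, if any."""
        self.engaging = self._pending.popleft() if self._pending else None
        return self.engaging
```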

Radar integration is still relatively rare on main battle tanks but is becoming more common on infantry fighting vehicles and reconnaissance platforms. The Israeli Namer heavy IFV and several variants of the CV90 now carry active protection system radars that detect incoming threats and trigger countermeasures. Those radar tracks can, in principle, be shared across the formation's data network, giving other vehicles warning before they're in the engagement envelope themselves.

Active Protection Systems and Their Role in the Vetronics Picture

Active protection systems (APS) deserve their own mention because they sit at the intersection of sensors, computing, and networking in an interesting way. Systems like Trophy (Israel), Arena-M (Russia), and Iron Fist (also Israel) all use their own onboard radar and fire control computers to detect and intercept incoming anti-tank munitions. They operate at millisecond timescales that no human crew can match.

What's more relevant to battlefield networking is what happens after an APS intercepts a threat. On platforms where the APS is properly integrated into the vetronics architecture, the intercept event generates data: direction of attack, approximate origin point, munition type estimate. That data, pushed across the formation network, gives other vehicles and dismounted forces a heads-up about likely threat positions. In practice, the integration between APS and battlefield networking software is still immature on most platforms. The hardware does its job. Getting the data out of it and into the common operating picture in a useful time frame is still a work in progress.
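Turning an intercept into something the rest of the formation can use is mostly coordinate bookkeeping. The sketch below assumes the APS reports the threat's azimuth relative to the hull and that a range estimate is available. It adds the hull heading to get a true bearing, then projects the range along that bearing to approximate the launch point. The message fields are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class InterceptAlert:
    reporting_vehicle: str
    bearing_deg: float       # true bearing of the attack, clockwise from north
    origin_x_m: float        # estimated launch point on a local grid
    origin_y_m: float
    munition_estimate: str


def intercept_to_alert(vehicle_id: str, vehicle_x_m: float, vehicle_y_m: float,
                       hull_heading_deg: float, relative_azimuth_deg: float,
                       est_range_m: float, munition_estimate: str) -> InterceptAlert:
    """Convert a hull-relative APS intercept into a shareable alert.

    Hull heading + relative azimuth gives a true bearing; projecting the
    estimated range along that bearing gives an approximate origin point.
    """
    bearing = (hull_heading_deg + relative_azimuth_deg) % 360.0
    rad = math.radians(bearing)
    return InterceptAlert(
        reporting_vehicle=vehicle_id,
        bearing_deg=bearing,
        origin_x_m=vehicle_x_m + est_range_m * math.sin(rad),
        origin_y_m=vehicle_y_m + est_range_m * math.cos(rad),
        munition_estimate=munition_estimate,
    )
```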

| Platform | APS System | Network Integration Status |
|---|---|---|
| M1A2 SEPv3 (USA) | Trophy HV | Partial: intercept data logged, limited real-time network push |
| Merkava Mk 4M (Israel) | Trophy | Most mature: developed alongside C2 architecture |
| CV90 (various) | Iron Fist | Varies by nation; some have network-integrated configurations |
| T-14 Armata (Russia) | Afganit | Full integration; limited operational data available |

What Ukraine Has Taught the World About Armored Networking in Combat

The conflict in Ukraine has produced more real-world data about armored vehicle performance in high-intensity warfare than the previous two decades of counterinsurgency combined. Several things about battlefield networking and vetronics have been validated or challenged by what's happened there.

First, the value of networked situational awareness has been confirmed dramatically. Ukrainian forces equipped with Western systems, including M113s and various IFVs with digital datalinks, have consistently reported that knowing where friendly forces are in real time reduces fratricide and allows faster reaction to enemy movements. This isn't surprising to anyone who has followed capability development, but it's now empirically demonstrated rather than theoretically argued.

Second, emissions control has become a survival skill, not an operational nicety. Russian forces learned early in the conflict to direction-find active radio emissions and target vehicles that are transmitting. Ukrainian crews have had to learn when to transmit and when to go dark, which creates a direct tension with battlefield networking that assumes continuous communication. The solution that's emerging is burst transmission and frequency-hopping waveforms that make direction-finding harder, but this requires more sophisticated radio hardware than older platforms carry.
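Frequency hopping works because both ends of a link can compute the same pseudorandom channel sequence from shared key material. An eavesdropper without the key sees energy jumping unpredictably across the band. The sketch below uses HMAC-SHA256 from Python's standard library as a stand-in pseudorandom function. Real military transmission-security algorithms are not public and differ from this, and the key, slot numbering, and channel count are assumptions.

```python
import hashlib
import hmac


def hop_channel(shared_key: bytes, slot: int, num_channels: int) -> int:
    """Channel index for one time slot, derived from a shared secret.

    Both radios run this with the same key and a common slot counter,
    so they land on the same channel without ever announcing it.
    (Modulo bias is ignored; it is tiny for a 32-bit value.)
    """
    digest = hmac.new(shared_key, slot.to_bytes(8, "big"), hashlib.sha256).digest()
    return int.from_bytes(digest[:4], "big") % num_channels


def hop_sequence(shared_key: bytes, start_slot: int, length: int,
                 num_channels: int) -> list[int]:
    """Channels for a run of consecutive slots."""
    return [hop_channel(shared_key, s, num_channels)
            for s in range(start_slot, start_slot + length)]


if __name__ == "__main__":
    key = b"demo-key-not-real-transec"  # hypothetical shared key
    print(hop_sequence(key, 0, 10, 100))
```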

You can have the best battlefield network in the world. If the enemy can find you by your transmissions, you've just built yourself a very expensive target.

Third, the integration of commercial and military systems has proven more effective than many expected. Ukrainian forces have used Starlink terminals, commercial tablets running apps like DELTA (Ukraine's own battlefield management system), and military radios together in ways that bypassed some of the limitations of purely military networking hardware. That improvisational integration is influencing how Western armies are thinking about open architecture standards for future programs.

The Standards and Architectures That Matter for Interoperability

If you're trying to understand how different nations' armored vehicles actually connect to each other in a coalition operation, the starting point is NATO's STANAG 4527, which defines a common interface for vehicle-to-vehicle data exchange. Below that sits the challenge of getting different national systems to actually speak the same data formats, which is harder than it sounds when each country has procured systems from different vendors with different data schemas.
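The data-format problem is usually handled with adapters that translate each national message into one agreed schema. Both national formats and the common schema below are hypothetical. They are not taken from STANAG 4527 or any fielded system, and they only illustrate the kind of unit and structure conversions involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommonTrack:
    """Hypothetical coalition schema: decimal degrees, metres, UTC seconds."""
    track_id: str
    lat_deg: float
    lon_deg: float
    alt_m: float
    time_utc_s: float


def from_nation_a(msg: dict) -> CommonTrack:
    # Hypothetical format A: flat message, microdegrees, altitude in feet.
    return CommonTrack(
        track_id=f"A:{msg['id']}",
        lat_deg=msg["lat_udeg"] / 1e6,
        lon_deg=msg["lon_udeg"] / 1e6,
        alt_m=msg["alt_ft"] * 0.3048,
        time_utc_s=msg["t"],
    )


def from_nation_b(msg: dict) -> CommonTrack:
    # Hypothetical format B: nested position, metres, time in milliseconds.
    pos = msg["position"]
    return CommonTrack(
        track_id=f"B:{msg['trackNumber']}",
        lat_deg=pos["lat"],
        lon_deg=pos["lon"],
        alt_m=pos["altM"],
        time_utc_s=msg["timeMs"] / 1000.0,
    )


ADAPTERS = {"A": from_nation_a, "B": from_nation_b}


def to_common(source: str, msg: dict) -> CommonTrack:
    """Route a message to the adapter for its source format."""
    try:
        adapter = ADAPTERS[source]
    except KeyError:
        raise ValueError(f"no adapter for source {source!r}") from None
    return adapter(msg)
```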

The U.S. Army's approach to this has been VICTORY (Vehicular Integration for C4ISR/EW Interoperability), a framework aimed at defining standard interfaces for vetronics systems so that components from different vendors can be swapped or upgraded without rebuilding the entire integration. The British Army's parallel effort is the Generic Vehicle Architecture (GVA), defined under DEF STAN 23-09. The two architectures share principles but are not the same standard.

What both approaches recognize is that vetronics integration has historically been a vendor lock-in problem. If your fire control computer, your navigation system, and your radio are all proprietary and talk to each other through custom interfaces, upgrading any one of them requires re-engineering all the connections. Open architecture standards are meant to solve this, though in practice the transition from older proprietary systems is slow and expensive.
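The difference between a custom interface and an open one can be shown in miniature. If the fire control code depends only on an agreed interface, a navigation unit from a different vendor can be dropped in without touching it. Real open architectures define these interfaces as networked data models and messages rather than in-process classes, so treat this Python analogy, and every class name in it, as hypothetical.

```python
from typing import Protocol


class NavigationSource(Protocol):
    """Hypothetical open interface every navigation unit must implement."""

    def position(self) -> tuple[float, float]:
        """Return (latitude, longitude) in decimal degrees."""
        ...


class VendorXNav:
    # Hypothetical vendor unit; its internals are the vendor's business.
    def position(self) -> tuple[float, float]:
        return (50.10, 8.60)


class VendorYNav:
    # A replacement unit from a different hypothetical vendor.
    def __init__(self, lat: float, lon: float) -> None:
        self._fix = (lat, lon)

    def position(self) -> tuple[float, float]:
        return self._fix


class FireControl:
    """Consumer that depends only on the interface, not on a vendor."""

    def __init__(self, nav: NavigationSource) -> None:
        self.nav = nav

    def own_position_label(self) -> str:
        lat, lon = self.nav.position()
        return f"{lat:.2f},{lon:.2f}"


if __name__ == "__main__":
    fc = FireControl(VendorXNav())
    print(fc.own_position_label())
    fc.nav = VendorYNav(51.5, 7.25)  # upgrade: no fire control changes needed
    print(fc.own_position_label())
```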

Where Battlefield Networking and Vetronics Are Heading

The near-term direction is clear from what's already in development. Software-defined radios are replacing hardware-defined ones, which means waveform upgrades can be pushed as software updates rather than requiring physical hardware swaps. Artificial intelligence is being integrated into sensor fusion layers to reduce the cognitive load on crews who are already managing more information than any previous generation of armored vehicle operators. And unmanned systems are being added to formation networks, with armored vehicles acting as relay nodes and mission commanders for loitering munitions and ground robots.

The longer-term challenge is managing the electromagnetic signature problem. As armored vehicles become more capable and more networked, they also become more electromagnetically active, which makes them easier to detect and target. Solving that tension, between the situational awareness benefit of networking and the survivability cost of emissions, is probably the defining engineering problem for the next generation of armored vehicle development.
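A rough calculation shows why emissions control pays off. Under free-space propagation, received power falls with the square of distance. The range at which an enemy receiver of fixed sensitivity can still detect a transmitter therefore scales with the square root of transmit power. The sketch assumes free-space conditions. Ground-level propagation over terrain usually attenuates faster, so real figures will differ.

```python
import math


def intercept_range_ratio(power_change_db: float) -> float:
    """Relative free-space intercept range after a transmit-power change.

    Received power ~ P_t / d^2, so for a fixed receiver sensitivity the
    maximum detection distance scales with sqrt(P_t).
    """
    power_ratio = 10 ** (power_change_db / 10)
    return math.sqrt(power_ratio)


def intercept_range_km(baseline_km: float, power_change_db: float) -> float:
    """Scale a baseline intercept range by a power change in dB."""
    return baseline_km * intercept_range_ratio(power_change_db)


if __name__ == "__main__":
    for change in (0, -6, -10, -20):
        print(change, "dB ->", round(intercept_range_ratio(change), 3))
```

Under that assumption, cutting power by 20 dB shrinks the intercept range to a tenth, which is one reason low-power operation is part of the emissions-control toolkit.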

A useful exercise if you're tracking this space is to look at which programs are investing in low-probability-of-intercept and low-probability-of-detection waveforms versus those that are still treating radio emissions as an acceptable signature. That distinction tells you a lot about how seriously a program takes the threat environment it is designing for.

What This All Means for Understanding Modern Armored Warfare

The physical platform still matters. Armor, firepower, and mobility are not going away as the basic performance parameters of an armored vehicle. But the competitive advantage in armored warfare has shifted toward information dominance. The vehicle that sees first, shares that information fastest, and acts on it before the enemy can react is the vehicle that wins the engagement, regardless of whether it has a bigger gun.

Battlefield networking and vetronics are the systems that make that possible. They're not add-ons or nice-to-haves. On any modern armored platform worth the name, they're as fundamental as the engine. If you want to understand why the M1A2 SEPv3 is a fundamentally different fighting machine from the original M1A1, or why the Leopard 2A7 is not just a Leopard 2 with a new paint job, the answer lives in the vetronics and networking upgrades, not the gun.

If this is a topic you're researching seriously, the best next step is to get into the technical specifications for one platform in depth rather than staying at the survey level. Pick a vehicle you're interested in, find its program documentation or the relevant defense industry publications, and trace exactly how its vetronics architecture is organized. That ground-level understanding transfers to every other platform you look at afterward. If you want a starting point, our breakdown of the M1A2 SEPv3's integration architecture goes into that level of detail.